From Bestseller to Delisting: A Publishing Giant Fires the First Shot in the "Anti-AI" Campaign

On March 19, 2026, Hachette Publishing Group announced that it was pulling the horror novel "Shy Girl" entirely amid controversy over AI-generated content. It halted the planned US release and withdrew the English edition from sale, becoming the first major publishing group to cancel distribution of a book it had acquired because of suspected AI authorship. The upheaval not only shattered the reputation of a once wildly popular online book but also turned AI's impact on the content industry from a theoretical risk into a reality.

From Social Media Fame to Publisher Withdrawal

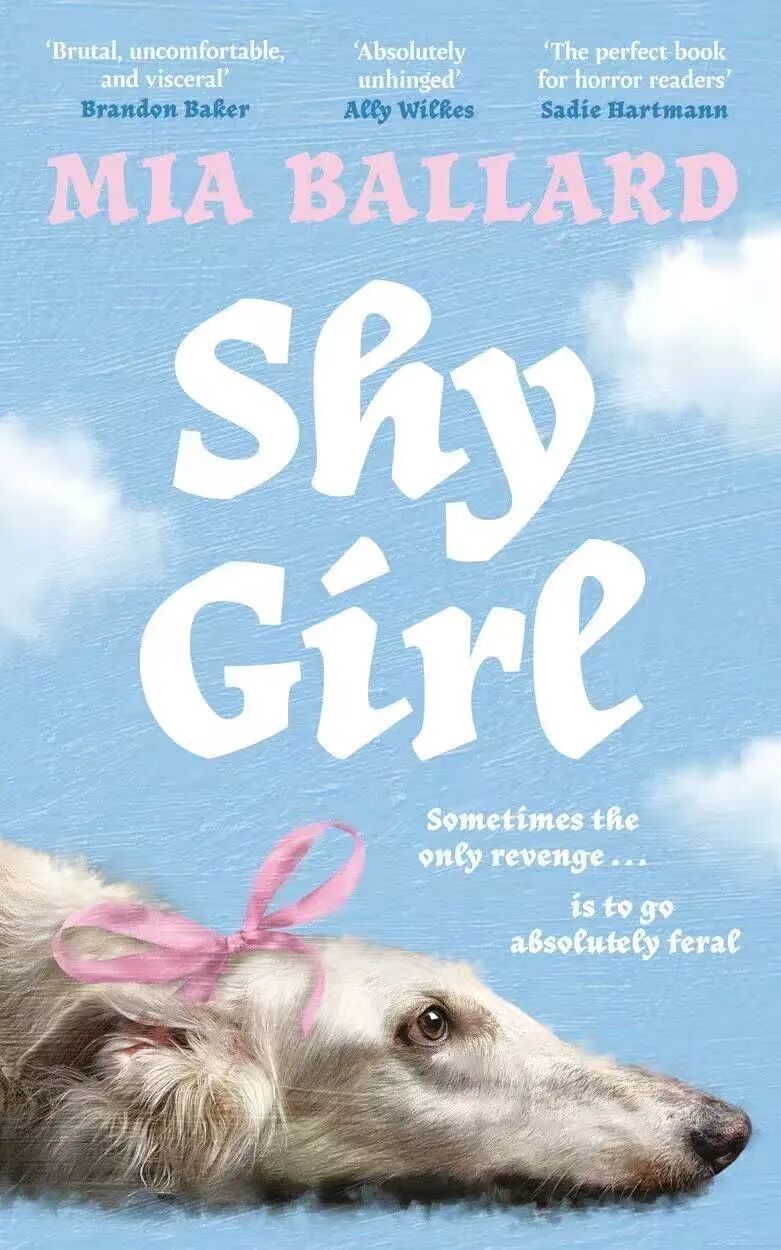

"Shy Girl" first became popular through social media but eventually fell out of favor amid widespread online criticism. The novel tells the story of a desperate young woman who is imprisoned by a man she met online and forced to act as his pet. The author, Mia Ballard, self-published it in February 2025. With its provocative subject matter and plot, the novel went viral on BookTok, drew nearly 5,000 reviews and a 3.52-star rating on Goodreads, and was subsequently picked up by Hachette Publishing Group. It was officially released in the UK in November 2025, where print sales reached 1,800 copies, and was originally scheduled for release in the US in May 2026.

As the book's reach expanded, some attentive readers and publishing professionals noticed traces of AI generation in the work. In January 2026, a book editor who claimed 12 years of experience posted a long article on Reddit arguing that its writing style was indistinguishable from that of a large language model (LLM), citing excessive use of adjectives, highly repetitive sentence structures and expressions, and overuse of hyphens. Shortly afterward, a nearly three-hour YouTube analysis video appeared with the blunt title "I'm almost certain this book is a poorly written AI-generated work," giving a detailed breakdown of the features in the novel suspected of being AI-generated. The video surged past 1.2 million views and brought the controversy to its peak. Professional detection results lent further weight to the accusations. Max Spero, founder and CEO of the AI detection company Pangram, stated after testing that 78% of the book's content was AI-generated. He also commented sharply, "Obviously, even if this isn't entirely written by AI, AI completed a very large part of it." In addition, netizens analyzed two other works by Mia Ballard and concluded that most of their content was AI-generated as well. Public opinion then reversed, with one-star reviews quickly piling up on Goodreads accusing the book of having been written by ChatGPT.

After the New York Times presented Hachette with evidence on March 19 that the novel was suspected of being AI-generated, the book was removed from Amazon and from Hachette's official website that afternoon. The next day, the company said it would cancel the book's publication in the United States and stop selling it in the UK. A Hachette spokesperson said the company remains committed to preserving original creative expression and storytelling. The spokesperson added that Hachette requires all submitted works to be the author's own original work and asks authors to disclose to the company whether they used artificial intelligence tools in the writing process.

Mia Ballard, however, firmly denied using AI in an email sent to the New York Times late Thursday night. She blamed all of the problems on the editor who handled the self-published version, claiming that the editor had used AI on her work without authorization. She said bluntly that "such a controversy has ruined my reputation, and my mental state has hit rock bottom," and added that she had taken legal action against the editor. This statement was not accepted by the industry or by netizens. Thad McIlroy, a publishing industry consultant, said: "This incident confirms the AI risks that the industry has long predicted, and the impact of AI on the publishing industry has changed from theory to reality."

The impact of this incident goes far beyond the removal of a single book. Before deciding to pull "Shy Girl," Hachette had already invested in advance payments, editorial resources, marketing planning, and cover design. Although these costs were already sunk, the publisher still judged the consequences of continuing to publish to be more serious than writing them off. The decision reflects the weight the publisher places on the trust of readers and the author community, and on its long-term brand reputation. In a book market driven by trust, the potential damage from publishing a novel suspected of being AI-generated far outweighs the short-term financial loss of canceling its distribution.

The Industry Problems Behind the Turmoil

The removal of "Shy Girl" is not an isolated case. In an industry where AI has already penetrated deeply, the underlying problems of publishing were exposed long ago. Since the arrival of ChatGPT in 2022, generative AI tools have continued to iterate and have become new instruments of content production. Self-publishing platforms such as Amazon Kindle Direct Publishing have been hit hardest by AI content. Because the barrier to publishing on these platforms is low, AI-generated text can be written quickly and put on sale there, and large numbers of AI-generated science fiction titles, practical reference books, and children's books have appeared, trapping the content industry in a dilemma of "high yield and low quality" and homogenized content. More importantly, these platforms' policies on AI-generated content are ambiguous, which has allowed a large influx of AI-assisted or purely AI-generated content and turned self-publishing platforms into a springboard for AI text to flow into traditional publishing. In recent years, to reduce acquisition risk and tap market potential, traditional publishers have tended to pick up works from self-publishing platforms that the market has already validated, and American publishers rarely make significant changes to the self-published works they acquire, which creates further hidden risks for the industry.

The incident has exposed gaps in content review at traditional publishers. Traditional publishing relies on the subjective experience of editors, who often cannot distinguish human-written text from AI-written text, especially text that retains original content but has been processed by AI. As a leading publishing group with a 200-year history, Hachette has a professional editorial team and a mature review process, yet it still failed to identify the traces of AI in "Shy Girl" across multiple rounds of review. Moreover, the AI detection tools adopted by the industry suffer from prominent problems with both false positives and false negatives. OpenAI withdrew its own text detector because of low accuracy, and there is currently no unified technical standard that can give the publishing industry reliable detection data. With manual review failing and technical detection unreliable, the publishing industry finds itself in the awkward position of being unable to identify AI-generated content accurately.

The fundamental problem exposed by incidents like this one is that industry rules and legal systems lag behind the technology. Most publishing contracts cover only plagiarism and ownership of rights. They do not clearly define who owns AI-generated content, nor do they specify how much AI may be used in the creative process or whether that use must be disclosed. At the legal level, although China's State Council proposed in its 2025 Legislative Work Plan to "promote the legislative work of promoting the healthy development of artificial intelligence," no dedicated law has yet been promulgated, and key issues such as copyright ownership of AI-generated content and the determination of infringement arising from AI use still lack a legal basis.

AI Is Not the Original Sin

The removal of "Shy Girl" does not mean that the publishing industry, or the content industry as a whole, should reject AI technology.

Technology is neither good nor evil; what matters is how content creators and publishers set the boundaries of its use. The industry has long recognized the value of generative AI for publishing. Its application to organizing source material, selecting topics, proofreading manuscripts, typesetting and design, and other stages has significantly streamlined content production and improved publishing efficiency, which is why the industry set out to explore a human-machine collaborative model of creation in the first place. As technology takes on more of the work, publishers should shoulder their responsibility as gatekeepers so that AI remains an auxiliary tool rather than the creative subject. AI may be able to follow the logic of a text, but human creation combines textual logic with emotional logic, and its depth of thought and warmth are difficult for AI to simulate. As content gatekeepers, editors play a role in screening content, developing it in depth, and safeguarding its values that AI cannot easily replace.

For this reason, faced with the impact of AI technology, the publishing industry has begun actively working to improve its norms. Penguin Random House, Simon & Schuster, and HarperCollins have all updated their submission guidelines in recent months to address AI-generated content, and literary agencies such as Greene & Heaton and Eve White Literary Agency have added clauses to their submission guidelines urging authors not to use AI in their submission materials. Hachette's move may accelerate policy-making across the industry.

The removal of "Shy Girl" is a setback for the publishing industry in the AI era, but it also forces the industry to confront the issues head-on, moving the discussion of artificial intelligence from theory to concrete contract terms and legal provisions. Its impact will gradually reach every aspect of copyright negotiations, contract discussions, and the design of editorial systems. Many publishing practitioners have already begun calling on the national government to accelerate AI legislation and provide clear legal boundaries for AI applications in publishing. Publishers urgently need to develop reliable methods for detecting AI content, establish clear rules for disclosing AI usage, and strengthen AI-related clauses in contracts so that industry practice has rules to follow. For authors, the lesson is that the publishing industry is not yet ready to fully accept AI-generated content, and disclosure about the creative process will become one of the requirements of future publishing collaborations.

An even more important question remains. With AI detection technology still imperfect and enforcement currently resting only on authors' conscientiousness, it is unclear whether publishers can truly implement new standards and whether disputes over AI-generated content will keep recurring. Those uncertainties hang over the publishing industry like a sword of Damocles.